This content originally appeared on HackerNoon and was authored by Alaa Eddine Boubekeur

ChatPlus: A Great PWA for Chatting 💬✨🤩

ChatPlus is a progressive web app developed with React, Node.js, Firebase and other services.

You can talk with all your friends in real time 🗣️✨🧑🤝🧑❤️

You can call your friends and have video and audio calls with them 🎥🔉🤩

Send images and audio messages to your friends, and let an AI convert your speech to text whether you speak French, English or Spanish 🤖✨

The web app can be installed on any device and can receive notifications ⬇️🔔🎉

I would appreciate your support so much: leave us a star on the GitHub repository and share it with your friends ⭐✨

Check out this GitHub repository for full installation and deployment documentation: https://github.com/aladinyo/ChatPlus

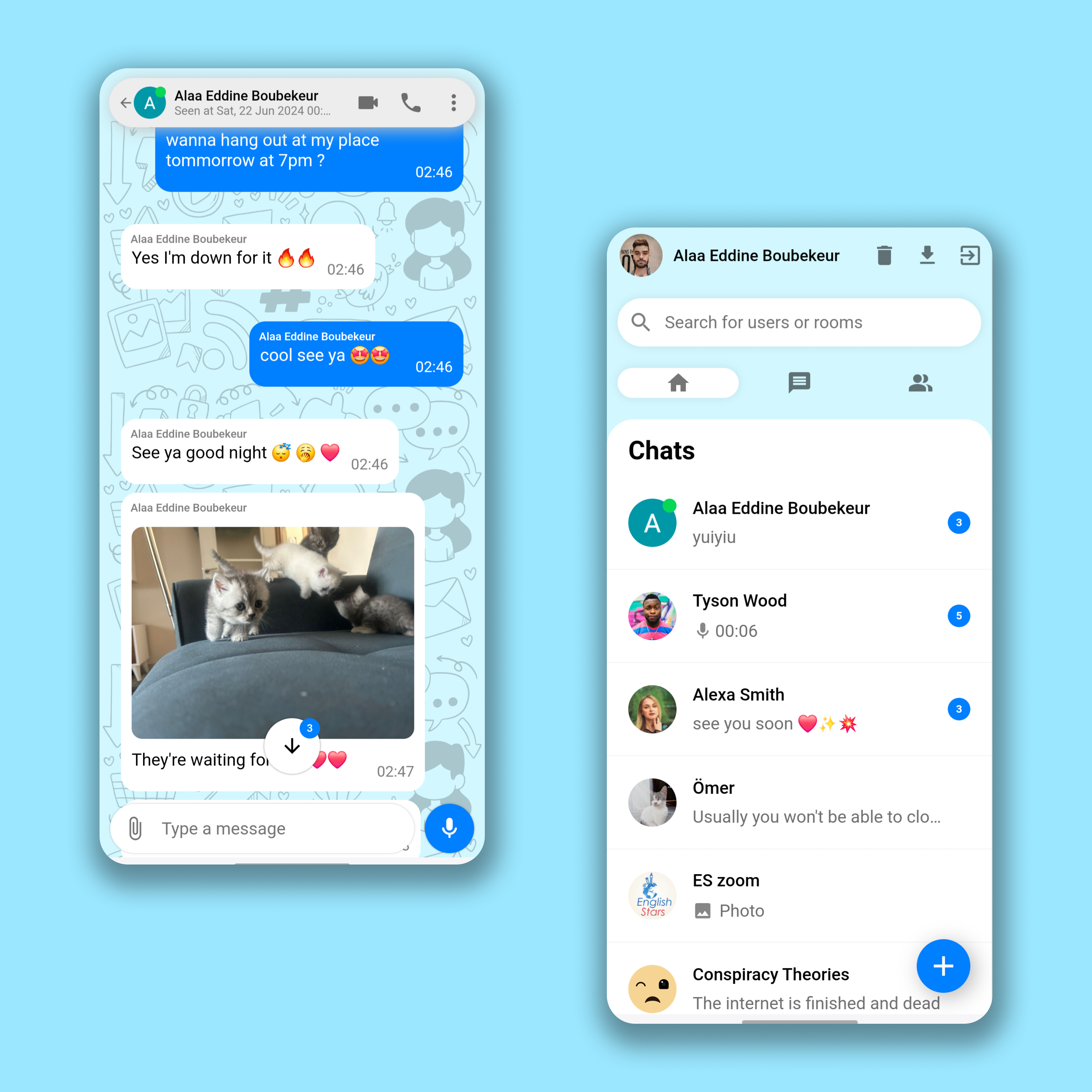

Clean Simple UI & UX

So What Is ChatPlus?

ChatPlus is one of the best applications I have made. It attracts attention with its vibrant user interface and its many messaging and call features, all of which are implemented on the web. It gives a cross-platform experience because its frontend is designed as a PWA that can be installed anywhere and act like a standalone app, with features such as push notifications. ChatPlus is a lightweight app that delivers the full features of a mobile app using web technologies.

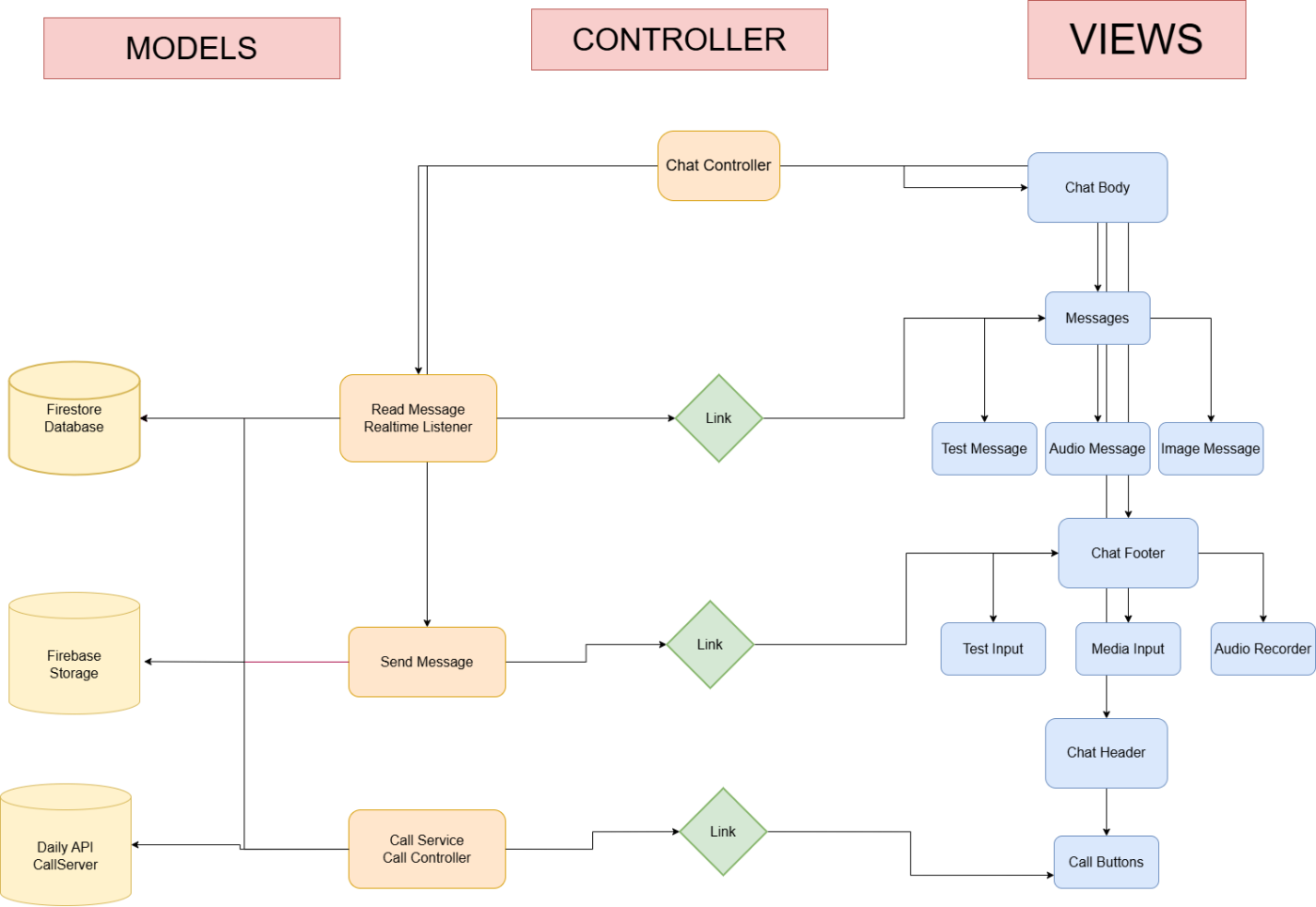

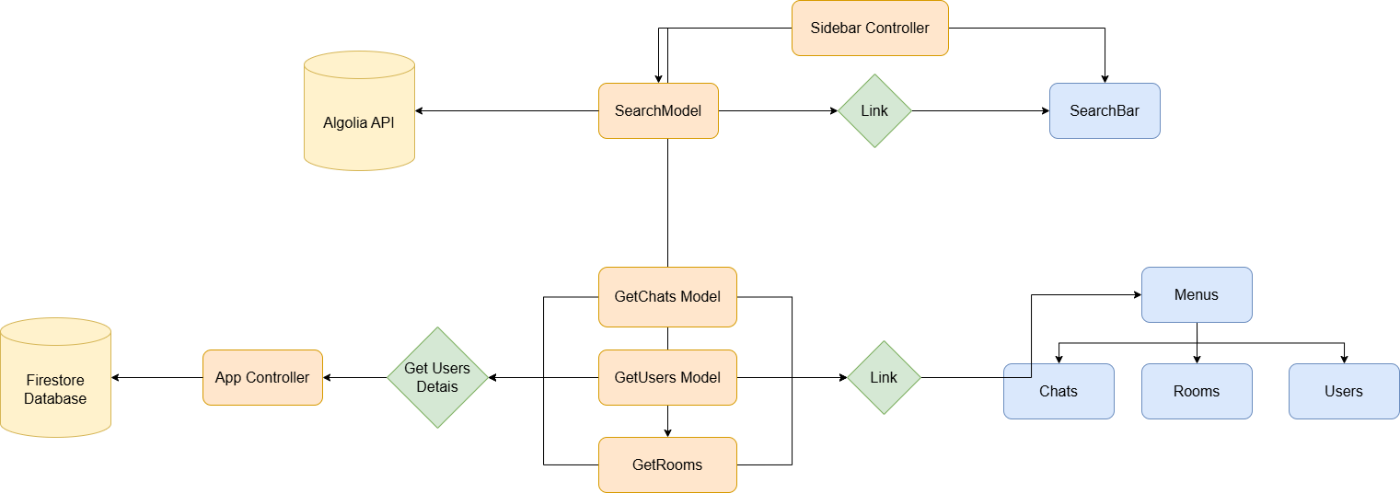

Software Architecture

Our app follows the MVC software architecture. MVC (Model-View-Controller) is a software design pattern commonly used to implement user interfaces, data, and controlling logic. It emphasizes a separation between the software's business logic and its display. This "separation of concerns" provides a better division of labor and easier maintenance.

User interface layer (View)

It consists of multiple React interface components:

MaterialUI: Material UI is an open-source React component library that implements Google's Material Design. It's comprehensive and can be used in production out of the box. We use it for icons, buttons and some elements in our interface such as the audio slider.

LoginView: A simple login view that lets users enter their username and password or log in with Google.

SidebarViews: multiple view components such as ‘sidebarheader’, ‘sidebarsearch’, ‘sidebar menu’ and ‘SidebarChats’. They provide a header for user information, a search bar for searching users, a sidebar menu to navigate between your chats, your groups and users, and a SidebarChats component that displays your recent chats with each user's name, photo and last message.

ChatViews: it consists of the following components:

1. ‘chat__header’: contains the information of the user you’re talking to (online status and profile picture), displays buttons for audio and video calls, and shows whether the user is typing.

2. ‘chat__body--container’: contains the messages exchanged with the other user. Its message components display text messages, images with their message, and audio messages with their information such as the audio time and whether the audio was played. At the end of this component, the ‘seen’ element displays whether messages were seen.

3. ‘AudioPlayer’: a React component that plays an audio message with a slider to navigate it and displays the full time and current time of the audio. This view component is loaded inside ‘chat__body--container’.

4. ‘ChatFooter’: contains an input to type a message, a button that sends the message when the input has text and otherwise lets you record audio, and a button to import images and files.

5. ‘MediaPreview’: a React component that previews the images or files we have selected to send in our chat. They are displayed in a carousel, and the user can slide through the images or files and type a specific message for each one.

6. ‘ImagePreview’: displays the images sent in our chat in full screen with a smooth animation. The component mounts after clicking on an image.

scalePage: a view function that increases the size of our web app when it is displayed on large screens such as full HD and 4K screens.

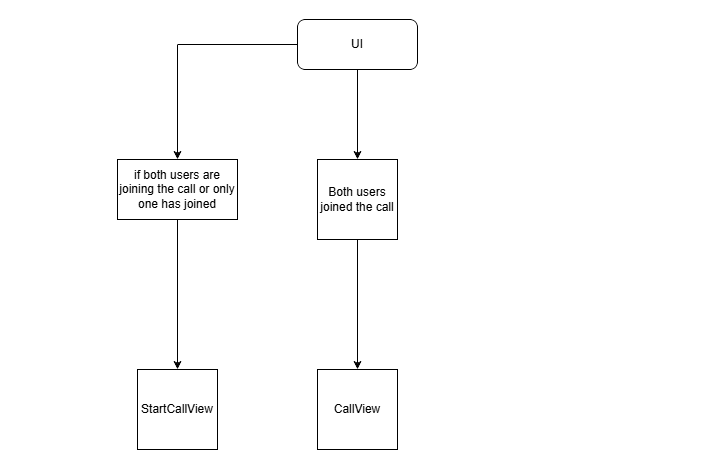

CallViews: a set of React components that contain all the call view elements. They can be dragged all over the screen, and they consist of:

1. ‘Buttons’: a call button with a red version of it, and a green video call button.

2. ‘AudioCallView’: a view component that lets you answer an incoming audio call, displays the call with a timer, and lets you cancel the call.

3. ‘StartVideoCallView’: a view component that displays a video of ourselves by connecting to the local MediaAPI. It either waits for the other user to accept the call or displays a button for us to answer an incoming video call.

4. ‘VideoCallView’: a view component that displays a video of us and the other user. It lets you switch cameras, disable the camera and audio, and go fullscreen.

RouteViews: React components that contain all of our views in order to create local component navigation. We have ‘VideoCallRoute’, ‘SideBarMenuRoute’ and ‘ChatsRoute’.

Client Side Models (Model)

Client side models are the logic that allows our frontend to interact with databases and with multiple local and server-side APIs. They consist of:

- Firebase SDK: It’s an SDK that is used to build the database of our web app.

- AppModel: A model that generates a user after authentication and also makes sure that we have the latest version of our web assets.

- ChatModels: the model logic for sending messages to the database, establishing listeners for new messages, and listening to whether the other user is online and whether they are typing. It also sends our media, such as images and audio, to the database storage.

- SidebarChatsModel: Logic that listens to the latest messages of users and gives us an array of all your new messages from users. It also gives the number of unread messages and the online status of users, and it organizes the users based on the time of the last message.

- UsersSearchModel: Logic that searches for users in our database. It uses Algolia search, which holds a list of our users that is linked to our database on the server.

- CallModel: Logic that uses the Daily SDK to create a call on our web app, sends the data to our server and interacts with the Daily API.
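The ordering that SidebarChatsModel performs can be sketched in isolation. This is only an illustration: the chat shape (`lastMessageAt`, `unreadMessages`) and the function name `organizeChats` are assumptions made for the example, not the fields ChatPlus actually stores.

```javascript
// Hypothetical chat summary shape (not taken from the ChatPlus source):
// { userID: string, lastMessageAt: number (ms since epoch), unreadMessages: number }
function organizeChats(chats) {
  // Copy before sorting so the caller's array is not mutated.
  const sorted = [...chats].sort((a, b) => b.lastMessageAt - a.lastMessageAt);
  // Sum the unread counters, e.g. for a badge on the sidebar menu.
  const totalUnread = sorted.reduce((sum, chat) => sum + chat.unreadMessages, 0);
  return { sorted, totalUnread };
}

const organized = organizeChats([
  { userID: "a", lastMessageAt: 1000, unreadMessages: 2 },
  { userID: "b", lastMessageAt: 3000, unreadMessages: 0 },
  { userID: "c", lastMessageAt: 2000, unreadMessages: 1 },
]);
console.log(organized.sorted.map(chat => chat.userID), organized.totalUnread);
```

Sorting a copy keeps the function free of side effects, which makes it easy to call from a listener every time a new snapshot arrives.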

Client Side Controllers (Controller)

It consists of React components that link our views with their specific models:

- App Controller: Links the authenticated user to all components and runs the scalePage function to adjust the size of our app. It also loads Firebase and attaches all the components, so we can consider it a wrapper around our components.

- SideBarController: Links the user's data and the list of their latest chats. It also links our menus with their model logic and links the search bar with the Algolia search API.

- ChatController: this is a very big controller that links most of the messaging and chat features.

- CallController: Links the call model with its views.

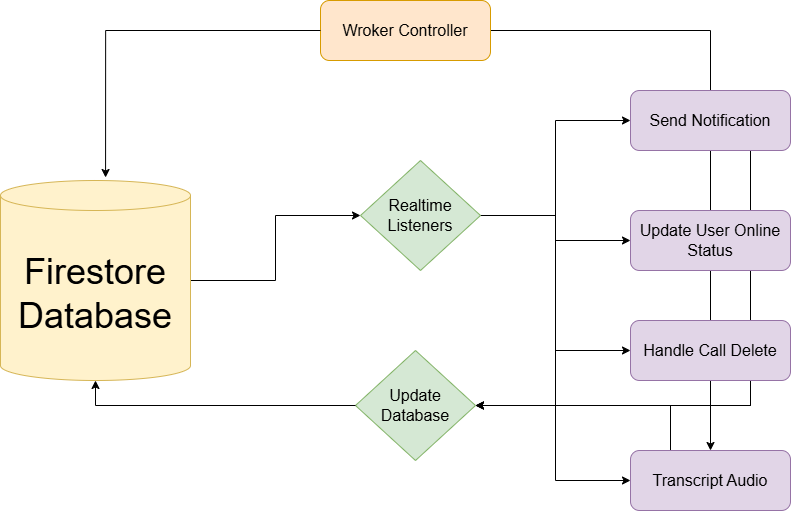

Server Side Model

Not all features are done on the frontend, as the SDKs we used require some server-side functionality. The server side models consist of:

- CallServerModel: Logic that allows us to create rooms for calls by interacting with the Daily API and updating our Firestore database.

- TranscriptModel: Logic on the server that receives an audio file, interacts with the Google Cloud Speech-to-Text API and returns a transcript for audio messages.

- Online Status Handler: A listener that listens to the online status of users and updates the database accordingly.

- Notification Model: A service that sends notifications to other users.

- AlgoliaSaver: A listener that listens to new users in our database and updates Algolia accordingly so we can use it for the search feature on the frontend.

Server Side Controllers

- CallServer: an API endpoint that contains callModel.

- Worker: a worker service that runs all our Firebase handling services.

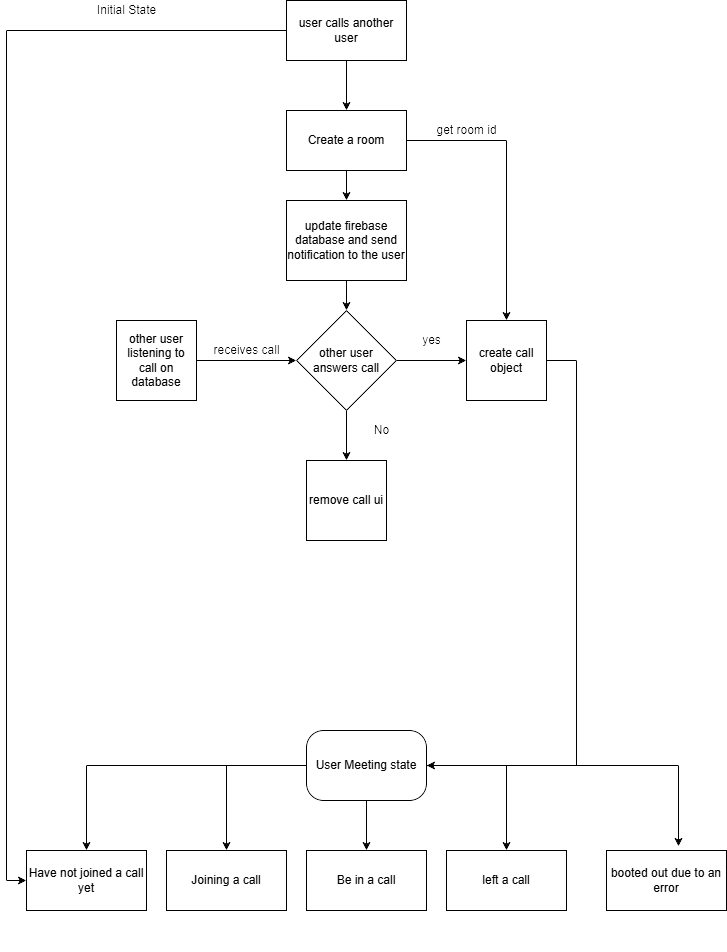

Chat Flow Chart

Sidebar Flow Chart

Model Call Flow Chart

View Call Flow Chart

Backend Worker Flow Chart

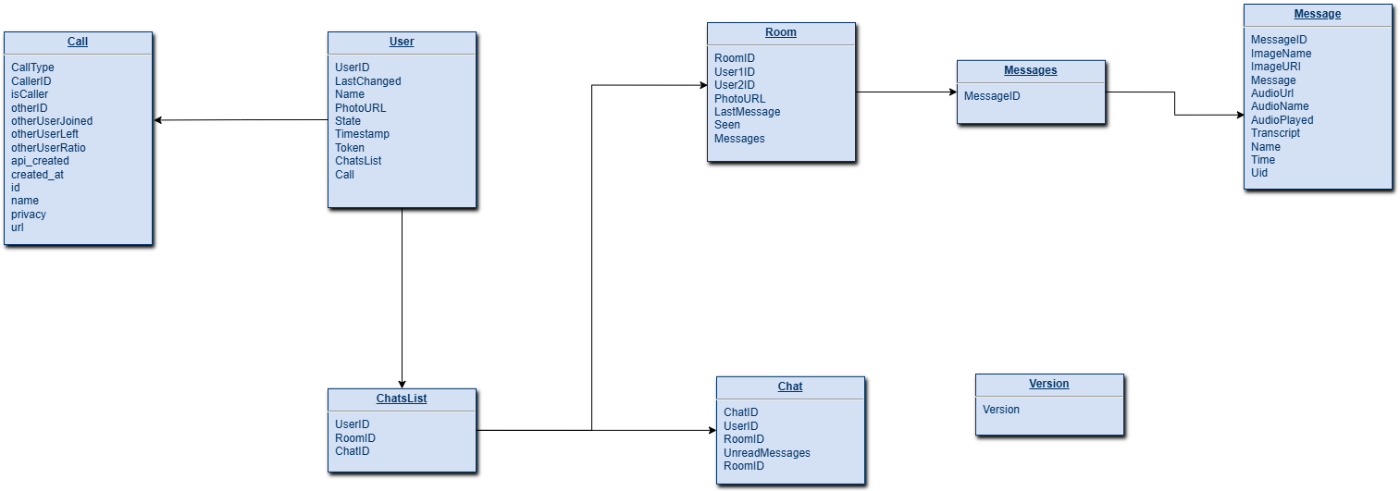

Database Design

Our web app uses Firestore, a Firebase NoSQL database, to store its data. We store user information, a list of all messages, a list of chats, and the chats inside rooms. This is the data stored in our database:

Users data after authentication.

Rooms that contain all the details of messages.

List of latest chats for each user.

List of notifications to be sent.

List of audio files to be transcribed.
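To make these collections more concrete, here is a rough sketch of what some documents might look like. The field names are the ones read or written by the code shown in this article. The placeholder names inside the paths (such as `{notificationID}`) and all values are invented for illustration. The list of latest chats is left out because its layout is not shown here.

```javascript
// Example documents keyed by Firestore path. Field names come from the code in
// this article; every value and path placeholder is a made-up illustration.
const exampleDocuments = {
  "users/{uid}": {
    id: "<uid>",
    state: "online",
    last_changed: new Date(0),
    token: "<messaging-token>",
  },
  "users/{uid}/call/call": {
    room: { url: "<daily-room-url>" },
    callType: "video",
    isCaller: true,
    otherUserLeft: false,
    otherUserJoined: false,
    callerID: "<uid>",
    otherID: "<other-uid>",
  },
  "rooms/{roomID}/messages/{messageID}": {
    transcript: "<text produced by speech-to-text>",
  },
  "notifications/{notificationID}": {
    userID: "<uid>",
    title: "<sender display name>",
    body: "<message text>",
    photoURL: "<sender photo URL>",
    token: "<receiver messaging token>",
  },
  "transcripts/{transcriptID}": {
    audioName: "<file name in storage>",
    short: true,
    supportWebM: false,
    roomID: "<roomID>",
    messageID: "<messageID>",
  },
};

// Print each path with the fields it carries.
for (const [path, doc] of Object.entries(exampleDocuments)) {
  console.log(path, "->", Object.keys(doc).join(", "));
}
```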

Explanations of Magical Code 🔮

In the next sections I’m going to give a quick explanation and tutorial for certain functionalities in ChatPlus. I’m going to show you the JS code, explain the algorithm behind it, and provide the right integration tools to link your code with the database.

Handling Online Status

The online status of users was implemented with the Firebase Realtime Database connectivity feature: the frontend listens to “.info/connected” and updates both Firestore and the Realtime Database accordingly:

var disconnectRef;

function setOnlineStatus(uid) {

try {

console.log("setting up online status");

const isOfflineForDatabase = {

state: 'offline',

last_changed: createTimestamp2,

id: uid,

};

const isOnlineForDatabase = {

state: 'online',

last_changed: createTimestamp2,

id: uid

};

const userStatusFirestoreRef = db.collection("users").doc(uid);

const userStatusDatabaseRef = db2.ref('/status/' + uid);

// Firestore uses a different server timestamp value, so we'll

// create two more constants for Firestore state.

const isOfflineForFirestore = {

state: 'offline',

last_changed: createTimestamp(),

};

const isOnlineForFirestore = {

state: 'online',

last_changed: createTimestamp(),

};

disconnectRef = db2.ref('.info/connected').on('value', function (snapshot) {

console.log("listening to database connected info")

if (snapshot.val() === false) {

// Instead of simply returning, we'll also set Firestore's state

// to 'offline'. This ensures that our Firestore cache is aware

// of the switch to 'offline.'

userStatusFirestoreRef.set(isOfflineForFirestore, { merge: true });

return;

};

userStatusDatabaseRef.onDisconnect().set(isOfflineForDatabase).then(function () {

userStatusDatabaseRef.set(isOnlineForDatabase);

// We'll also add Firestore set here for when we come online.

userStatusFirestoreRef.set(isOnlineForFirestore, { merge: true });

});

});

} catch (error) {

console.log("error setting online status: ", error);

}

};

On our backend we also set up a listener that listens to changes in the Realtime Database and updates Firestore accordingly. This function also gives us the online status of the user in real time, so we can run other functions inside it as well:

async function handleOnlineStatus(data, event) {

try {

console.log("setting online status with event: ", event);

// Get the data written to Realtime Database

const eventStatus = data.val();

// Then use other event data to create a reference to the

// corresponding Firestore document.

const userStatusFirestoreRef = db.doc(`users/${eventStatus.id}`);

// It is likely that the Realtime Database change that triggered

// this event has already been overwritten by a fast change in

// online / offline status, so we'll re-read the current data

// and compare the timestamps.

const statusSnapshot = await data.ref.once('value');

const status = statusSnapshot.val();

// If the current timestamp for this data is newer than

// the data that triggered this event, we exit this function.

if (eventStatus.state === "online") {

console.log("event status: ", eventStatus)

console.log("status: ", status)

}

if (status.last_changed <= eventStatus.last_changed) {

// Otherwise, we convert the last_changed field to a Date

eventStatus.last_changed = new Date(eventStatus.last_changed);

//handle the call delete

handleCallDelete(eventStatus);

// ... and write it to Firestore.

await userStatusFirestoreRef.set(eventStatus, { merge: true });

console.log("user: " + eventStatus.id + " online status was successfully updated with data: " + eventStatus.state);

} else {

console.log("next status timestamp is newer for user: ", eventStatus.id);

}

} catch (error) {

console.log("handle online status crashed with error :", error)

}

}
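The timestamp comparison is the key step in the handler above, so here it is as a small standalone function. This is just a sketch: the name `shouldApplyStatus` is made up for the example, and the statuses are plain objects with a numeric `last_changed` instead of real database snapshots.

```javascript
// Returns true when the event that triggered the handler is still the most
// recent status, i.e. nothing newer has been written since.
function shouldApplyStatus(eventStatus, currentStatus) {
  return currentStatus.last_changed <= eventStatus.last_changed;
}

// The user went offline at t=100, then came back online at t=150 before the
// "offline" event was processed: the stale event must be skipped.
const offlineEvent = { state: "offline", last_changed: 100 };
const latestInDatabase = { state: "online", last_changed: 150 };

console.log(shouldApplyStatus(offlineEvent, latestInDatabase)); // false, stale event
console.log(shouldApplyStatus(latestInDatabase, latestInDatabase)); // true, up to date
```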

Notifications

Notifications are a great feature, and they are implemented using Firebase messaging. On our frontend, if the user’s browser supports notifications, we configure it and retrieve the user’s Firebase messaging token:

const configureNotif = (docID) => {

messaging.getToken().then((token) => {

console.log(token);

db.collection("users").doc(docID).set({

token: token

}, { merge: true })

}).catch(e => {

console.log(e.message);

db.collection("users").doc(docID).set({

token: ""

}, { merge: true });

});

}
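The "browser supports notifications" check that runs before configureNotif could look roughly like the sketch below. The helper name `supportsPush` is hypothetical, and it receives the global object as a parameter only so it can be tried outside a browser. The Firebase SDK also ships its own `isSupported()` check for messaging, which may be a better fit in a real app.

```javascript
// Feature detection for web push: the page needs the Notification API, the
// Push API and service workers. globalObject is normally `window`.
function supportsPush(globalObject) {
  if (!globalObject || !globalObject.navigator) return false;
  return (
    "Notification" in globalObject &&
    "PushManager" in globalObject &&
    "serviceWorker" in globalObject.navigator
  );
}

// Fake globals standing in for a modern and a limited browser.
const modernBrowser = {
  Notification: function () {},
  PushManager: function () {},
  navigator: { serviceWorker: {} },
};
const limitedBrowser = { navigator: {} };

console.log(supportsPush(modernBrowser)); // true
console.log(supportsPush(limitedBrowser)); // false
```

In the browser this would be called as `supportsPush(window)` before asking for a token.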

Whenever a user sends a message, we add a notification to our database:

db.collection("notifications").add({

userID: user.uid,

title: user.displayName,

body: inputText,

photoURL: user.photoURL,

token: token,

});

On our backend we listen to the notifications collection and use Firebase messaging to send each notification to the user:

let listening = false;

db.collection("notifications").onSnapshot(snap => {

if (!listening) {

console.log("listening for notifications...");

listening = true;

}

const docs = snap.docChanges();

if (docs.length > 0) {

docs.forEach(async change => {

if (change.type === "added") {

const data = change.doc.data();

if (data) {

const message = {

data: data,

token: data.token

};

await db.collection("notifications").doc(change.doc.id).delete();

try {

const response = await messaging.send(message);

console.log("notification successfully sent :", data);

} catch (error) {

console.log("error sending notification ", error);

};

};

};

});

};

});
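One detail worth keeping in mind with the listener above: the `data` payload of a Firebase Cloud Messaging message only accepts string values, so a missing photo URL or a numeric field can make the send fail. A possible way to guard against that, shown here as a sketch with a hypothetical helper named `toStringPayload`:

```javascript
// Converts a notification document into a payload whose values are all
// strings, dropping fields that are null or undefined.
function toStringPayload(data) {
  const payload = {};
  for (const [key, value] of Object.entries(data)) {
    if (value === null || value === undefined) continue;
    payload[key] = typeof value === "string" ? value : JSON.stringify(value);
  }
  return payload;
}

const payload = toStringPayload({
  title: "Ann",
  body: "Hello!",
  photoURL: null, // e.g. a user without a profile picture
  unread: 3,
});
console.log(payload); // { title: 'Ann', body: 'Hello!', unread: '3' }
```

The result could then be passed as `data` in the message object instead of the raw document.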

AI Audio Transcription

Our web application allows users to send audio messages to each other, and one of its features is the ability to convert this audio to text for audio recorded in English, French and Spanish. This feature was implemented with Google Cloud Speech-to-Text. Our backend listens to new transcript requests added to Firestore, transcribes them, and then writes the result into the database:

db.collection("transcripts").onSnapshot(snap => {

const docs = snap.docChanges();

if (docs.length > 0) {

docs.forEach(async change => {

if (change.type === "added") {

const data = change.doc.data();

if (data) {

db.collection("transcripts").doc(change.doc.id).delete();

try {

const text = await textToAudio(data.audioName, data.short, data.supportWebM);

const roomRef = db.collection("rooms").doc(data.roomID).collection("messages").doc(data.messageID);

// Await the transaction so that failures reach the catch block below.

await db.runTransaction(async transaction => {

const roomDoc = await transaction.get(roomRef);

if (roomDoc.exists && !roomDoc.data()?.delete) {

transaction.update(roomRef, {

transcript: text

});

console.log("transcript added with text: ", text);

} else {

console.log("room is deleted");

}

});

// The transaction already writes the transcript, so a second unconditional

// update is not needed here (it would bypass the deleted-message check).

} catch (error) {

console.log("error transcripting audio: ", error);

};

};

};

});

};

});

Obviously, your eyes are on that textToAudio function and you’re wondering how I made it. Don’t worry, I got you (despite its name, it turns audio into text):

// Imports the Google Cloud client library

const speech = require('@google-cloud/speech').v1p1beta1;

const { gcsUriLink } = require("./configKeys")

// Creates a client

const client = new speech.SpeechClient({ keyFilename: "./audio_transcript.json" });

async function textToAudio(audioName, isShort) {

// The path to the remote audio file in Cloud Storage (MP3, see the config below)

const gcsUri = gcsUriLink + "/audios/" + audioName;

// The audio file's encoding, sample rate in hertz, and BCP-47 language code

const audio = {

uri: gcsUri,

};

const config = {

encoding: "MP3",

sampleRateHertz: 48000,

languageCode: 'en-US',

alternativeLanguageCodes: ['es-ES', 'fr-FR']

};

console.log("audio config: ", config);

const request = {

audio: audio,

config: config,

};

// Detects speech in the audio file

if (isShort) {

const [response] = await client.recognize(request);

return response.results.map(result => result.alternatives[0].transcript).join('\n');

}

const [operation] = await client.longRunningRecognize(request);

// Let errors propagate to the caller's try/catch: swallowing them here would

// make the destructuring fail on undefined.

const [response] = await operation.promise();

return response.results.map(result => result.alternatives[0].transcript).join('\n');

};

module.exports = textToAudio;
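Both branches of textToAudio end with the same mapping over `response.results`. Here is a sketch of that step as a separate helper that also tolerates an empty response. The name `joinTranscripts` is an assumption for the example, and the mock object below only imitates the fields the function above reads.

```javascript
// Joins the best alternative of every result into one text, one line per
// result. Results without alternatives are skipped instead of throwing.
function joinTranscripts(response) {
  const results = (response && response.results) || [];
  return results
    .map(result => result.alternatives && result.alternatives[0])
    .filter(Boolean)
    .map(alternative => alternative.transcript)
    .join("\n");
}

// Mock response with the same shape used above: results -> alternatives -> transcript.
const mockResponse = {
  results: [
    { alternatives: [{ transcript: "hello there" }] },
    { alternatives: [] },
    { alternatives: [{ transcript: "how are you" }] },
  ],
};

console.log(joinTranscripts(mockResponse));
```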

Video Call Feature

Our web app uses the Daily API to implement real-time WebRTC connections that let users video call each other. First we set up a backend call server with several API endpoints to create and delete rooms in Daily:

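// Assumes Express and the cors middleware are installed; fetch is global in

// Node 18+ (older versions need a package such as node-fetch). db and

// dailyApiKey are configured elsewhere in the project.

const express = require("express");

const cors = require("cors");

const app = express();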

app.use(cors());

app.use(express.json());

app.delete("/delete-call", async (req, res) => {

console.log("delete call data: ", req.body);

deleteCallFromUser(req.body.id1);

deleteCallFromUser(req.body.id2);

try {

// Await the request so a failed call is caught by the catch block below.

await fetch("https://api.daily.co/v1/rooms/" + req.body.roomName, {

headers: {

Authorization: `Bearer ${dailyApiKey}`,

"Content-Type": "application/json"

},

method: "DELETE"

});

} catch(e) {

console.log("error deleting room for call delete!!");

console.log(e);

}

res.status(200).send("delete-call success !!");

});

app.post("/create-room/:roomName", async (req, res) => {

var room = await fetch("https://api.daily.co/v1/rooms/", {

headers: {

Authorization: `Bearer ${dailyApiKey}`,

"Content-Type": "application/json"

},

method: "POST",

body: JSON.stringify({

name: req.params.roomName

})

});

room = await room.json();

console.log(room);

res.json(room);

});

app.delete("/delete-room/:roomName", async (req, res) => {

var deleteResponse = await fetch("https://api.daily.co/v1/rooms/" + req.params.roomName, {

headers: {

Authorization: `Bearer ${dailyApiKey}`,

"Content-Type": "application/json"

},

method: "DELETE"

});

deleteResponse = await deleteResponse.json();

console.log(deleteResponse);

res.json(deleteResponse);

})

app.listen(process.env.PORT || 7000, () => {

console.log("call server is running");

});

const deleteCallFromUser = userID => db.collection("users").doc(userID).collection("call").doc("call").delete();
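The three handlers above repeat the same authorization headers. One possible way to factor that out is sketched below. The helper name `dailyRequestOptions` is made up for this example and is not part of the Daily API or of ChatPlus.

```javascript
// Builds the options object passed to fetch for a Daily REST call.
// The body is only attached when one is provided.
function dailyRequestOptions(method, apiKey, body) {
  const options = {
    method,
    headers: {
      Authorization: `Bearer ${apiKey}`,
      "Content-Type": "application/json",
    },
  };
  if (body !== undefined) {
    options.body = JSON.stringify(body);
  }
  return options;
}

// Example with a placeholder key: options for creating a room named "demo".
console.log(dailyRequestOptions("POST", "<daily-api-key>", { name: "demo" }));
```

A handler could then call `fetch("https://api.daily.co/v1/rooms/", dailyRequestOptions("POST", dailyApiKey, { name: req.params.roomName }))`.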

On our frontend we have several functions that call this API:

import { callAPI as api } from "../../configKeys";

//const api = "http://localhost:7000"

export async function createRoom(roomName) {

var room = await fetch(`${api}/create-room/${roomName}`, {

method: "POST",

});

room = await room.json();

console.log(room);

return room;

}

export async function deleteRoom(roomName) {

var deletedRoom = await fetch(`${api}/delete-room/${roomName}`, {

method: "DELETE",

});

deletedRoom = await deletedRoom.json();

console.log(deletedRoom);

console.log("deleted");

};

export function deleteCall() {

window.callDelete && fetch(`${api}/delete-call`, {

method: "DELETE",

headers: {

"Content-Type": "application/json"

},

body: JSON.stringify(window.callDelete)

});

};

Great, now it’s time to create call rooms and use the Daily JS SDK to connect to these rooms and send and receive data from them:

export default async function startVideoCall(dispatch, receiverQuery, userQuery, id, otherID, userName, otherUserName, sendNotif, userPhoto, otherPhoto, audio) {

var room = null;

// createCallObject is a factory method, so it is called without `new`.

const call = DailyIframe.createCallObject();

const roomName = nanoid();

window.callDelete = {

id1: id,

id2: otherID,

roomName

}

dispatch({ type: "set_other_user_name", otherUserName });

console.log("audio: ", audio);

if (audio) {

dispatch({ type: "set_other_user_photo", photo: otherPhoto });

dispatch({ type: "set_call_type", callType: "audio" });

} else {

dispatch({ type: "set_other_user_photo", photo: null });

dispatch({ type: "set_call_type", callType: "video" });

}

dispatch({ type: "set_caller", caller: true });

dispatch({ type: "set_call", call });

dispatch({ type: "set_call_state", callState: "state_creating" });

try {

room = await createRoom(roomName);

console.log("created room: ", room);

dispatch({ type: "set_call_room", callRoom: room });

} catch (error) {

room = null;

console.log('Error creating room', error);

await call.destroy();

dispatch({ type: "set_call_room", callRoom: null });

dispatch({ type: "set_call", call: null });

dispatch({ type: "set_call_state", callState: "state_idle" });

window.callDelete = null;

//destroy the call object;

};

if (room) {

dispatch({ type: "set_call_state", callState: "state_joining" });

dispatch({ type: "set_call_queries", callQueries: { userQuery, receiverQuery } });

try {

await db.runTransaction(async transaction => {

console.log("runing transaction");

var userData = (await transaction.get(receiverQuery)).data();

//console.log("user data: ", userData);

if (!userData || !userData?.callerID || userData?.otherUserLeft) {

console.log("runing set");

transaction.set(receiverQuery, {

room,

callType: audio ? "audio" : "video",

isCaller: false,

otherUserLeft: false,

callerID: id,

otherID,

otherUserName: userName,

otherUserRatio: window.screen.width / window.screen.height,

photo: audio ? userPhoto : ""

});

transaction.set(userQuery, {

room,

callType: audio ? "audio" : "video",

isCaller: true,

otherUserLeft: false,

otherUserJoined: false,

callerID: id,

otherID

});

} else {

console.log('transaction failed');

throw userData;

}

});

if (sendNotif) {

sendNotif();

const notifTimeout = setInterval(() => {

sendNotif();

}, 1500);

dispatch({ type: "set_notif_tiemout", notifTimeout });

}

call.join({ url: room.url, videoSource: !audio });

} catch (userData) {

//delete the room we made

deleteRoom(roomName);

await call.destroy();

if (userData.otherID === id) {

console.log("you and the other user are calling each other at the same time");

joinCall(dispatch, receiverQuery, userQuery, userData.room, userName, audio ? userPhoto : "", userData.callType);

} else {

console.log("other user already in a call");

dispatch({ type: "set_call_room", callRoom: null });

dispatch({ type: "set_call", call: null });

dispatch({ type: "set_call_state", callState: "state_otherUser_calling" });

}

};

};

};

receiverQuery and userQuery are just Firestore document references. The rest of the app has view components that react to the state changes triggered by the function above, and our call UI elements appear accordingly.
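The decision made inside the transaction and its catch block can be summarized as a small pure function. This is a sketch that mirrors the conditions in startVideoCall. The name `decideCall` and the string results are invented for the example.

```javascript
// receiverCall is the receiver's current call document (or undefined).
// myId is the ID of the user who is trying to start the call.
function decideCall(receiverCall, myId) {
  const receiverIsFree =
    !receiverCall || !receiverCall.callerID || receiverCall.otherUserLeft;
  if (receiverIsFree) return "start"; // write both call documents and join
  // The receiver is already calling us: join their room instead.
  if (receiverCall.otherID === myId) return "join";
  return "busy"; // the receiver is in a call with someone else
}

console.log(decideCall(undefined, "me")); // "start"
console.log(decideCall({ callerID: "ann", otherID: "me" }, "me")); // "join"
console.log(decideCall({ callerID: "ann", otherID: "bob" }, "me")); // "busy"
```

Keeping the rule in one place like this makes the three outcomes (start, join, busy) easy to test without a database.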

Move Around the Call Element

This next function is the magic that allows you to drag the call element all over the page:

export function dragElement(elmnt, page) {

var pos1 = 0, pos2 = 0, pos3 = 0, pos4 = 0, top, left, prevTop = 0, prevLeft = 0, x, y, maxTop, maxLeft;

const widthRatio = page.width / window.innerWidth;

const heightRatio = page.height / window.innerHeight;

//clear element's mouse listeners

closeDragElement();

// set the listeners

elmnt.addEventListener("mousedown", dragMouseDown);

elmnt.addEventListener("touchstart", dragMouseDown, { passive: false });

function dragMouseDown(e) {

e = e || window.event;

// get the mouse cursor position at startup:

if (e.type === "touchstart") {

if (typeof(e.target.className) === "string") {

if (!e.target.className.includes("btn")) {

e.preventDefault();

}

} else if (typeof(e.target.className) !== "function") {

e.stopPropagation();

}

pos3 = e.touches[0].clientX * widthRatio;

pos4 = e.touches[0].clientY * heightRatio;

} else {

e.preventDefault();

pos3 = e.clientX * widthRatio;

pos4 = e.clientY * heightRatio;

};

maxTop = elmnt.offsetParent.offsetHeight - elmnt.offsetHeight;

maxLeft = elmnt.offsetParent.offsetWidth - elmnt.offsetWidth;

document.addEventListener("mouseup", closeDragElement);

document.addEventListener("touchend", closeDragElement, { passive: false });

// call a function whenever the cursor moves:

document.addEventListener("mousemove", elementDrag);

document.addEventListener("touchmove", elementDrag, { passive: false });

}

function elementDrag(e) {

e = e || window.event;

e.preventDefault();

// calculate the new cursor position:

if (e.type === "touchmove") {

x = e.touches[0].clientX * widthRatio;

y = e.touches[0].clientY * heightRatio;

} else {

e.preventDefault();

x = e.clientX * widthRatio;

y = e.clientY * heightRatio;

};

pos1 = pos3 - x;

pos2 = pos4 - y;

pos3 = x

pos4 = y;

// set the element's new position:

top = elmnt.offsetTop - pos2;

left = elmnt.offsetLeft - pos1;

//prevent the element from overflowing the viewport

if (top >= 0 && top <= maxTop) {

elmnt.style.top = top + "px";

} else if ((top > maxTop && pos4 < prevTop) || (top < 0 && pos4 > prevTop)) {

elmnt.style.top = top + "px";

};

if (left >= 0 && left <= maxLeft) {

elmnt.style.left = left + "px";

} else if ((left > maxLeft && pos3 < prevLeft) || (left < 0 && pos3 > prevLeft)) {

elmnt.style.left = left + "px";

};

prevTop = y; prevLeft = x;

}

function closeDragElement() {

// stop moving when mouse button is released:

document.removeEventListener("mouseup", closeDragElement);

document.removeEventListener("touchend", closeDragElement);

document.removeEventListener("mousemove", elementDrag);

document.removeEventListener("touchmove", elementDrag);

};

return function() {

elmnt.removeEventListener("mousedown", dragMouseDown);

elmnt.removeEventListener("touchstart", dragMouseDown);

closeDragElement();

};

};
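dragElement keeps the element inside its parent with a directional check on `top` and `left`. A simpler alternative, shown here only as a sketch and not what ChatPlus does, is a hard clamp of the computed position. The helper name `clampPosition` is hypothetical.

```javascript
// Clamps a computed position so the element stays within [0, max] on each axis.
// A negative max (element larger than its parent) is treated as 0.
function clampPosition(top, left, maxTop, maxLeft) {
  const clamp = (value, max) => Math.min(Math.max(value, 0), Math.max(max, 0));
  return { top: clamp(top, maxTop), left: clamp(left, maxLeft) };
}

// Dragged above the top edge and past the right edge of the parent.
console.log(clampPosition(-5, 50, 100, 40)); // { top: 0, left: 40 }
```

Inside elementDrag the result could be applied with `elmnt.style.top = position.top + "px"` and the same for `left`.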

Drag and Drop Images

You can drag and drop images into your chat and send them to the other user. This functionality is made possible by running this:

useEffect(() => {

const dropArea = document.querySelector(".chat");

['dragenter', 'dragover', 'dragleave', 'drop'].forEach(eventName => {

dropArea.addEventListener(eventName, e => {

e.preventDefault();

e.stopPropagation();

}, false);

});

['dragenter', 'dragover'].forEach(eventName => {

dropArea.addEventListener(eventName, () => setShowDrag(true), false)

});

['dragleave', 'drop'].forEach(eventName => {

dropArea.addEventListener(eventName, () => setShowDrag(false), false)

});

dropArea.addEventListener('drop', e => {

if (window.navigator.onLine) {

if (e.dataTransfer?.files) {

const dropedFile = e.dataTransfer.files;

console.log("dropped file: ", dropedFile);

const { imageFiles, imagesSrc } = mediaIndexer(dropedFile);

setSRC(prevImages => [...prevImages, ...imagesSrc]);

setImage(prevFiles => [...prevFiles, ...imageFiles]);

setIsMedia("images_dropped");

};

};

}, false);

}, []);

The mediaIndexer is a simple function that creates an object URL for each of the image files we pass to it:

function mediaIndexer(files) {

const imagesSrc = [];

const filesArray = Array.from(files);

filesArray.forEach((file, index) => {

imagesSrc[index] = URL.createObjectURL(file);

});

return { imagesSrc, imageFiles: filesArray };

}
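Two small additions might be useful around mediaIndexer: keeping only image files from a drop, and releasing the object URLs once the previews are no longer needed. Both helpers below are sketches with hypothetical names (`pickImages`, `releasePreviews`). The `revoke` parameter exists only so the example can run outside a browser.

```javascript
// Keeps only the dropped files whose MIME type marks them as images.
function pickImages(files) {
  return Array.from(files).filter(
    file => typeof file.type === "string" && file.type.startsWith("image/")
  );
}

// Frees the memory held by object URLs created with URL.createObjectURL.
function releasePreviews(urls, revoke = url => URL.revokeObjectURL(url)) {
  urls.forEach(url => revoke(url));
}

// File-like objects standing in for real File instances.
const dropped = [
  { name: "cat.png", type: "image/png" },
  { name: "notes.pdf", type: "application/pdf" },
  { name: "dog.jpg", type: "image/jpeg" },
];
console.log(pickImages(dropped).map(file => file.name)); // [ 'cat.png', 'dog.jpg' ]
```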

Alaa Eddine Boubekeur | Sciencx (2024-07-10T14:53:12+00:00) Building ChatPlus: The Open Source PWA That Feels Like a Mobile App. Retrieved from https://www.scien.cx/2024/07/10/building-chatplus-the-open-source-pwa-that-feels-like-a-mobile-app/
