This content originally appeared on HackerNoon and was authored by Mostofa Adib Shakib

\ Visual tracking systems are essential for applications ranging from surveillance to autonomous navigation. However, these systems have a significant Achilles' heel: they rely heavily on large, labeled datasets for training. This reliance makes it challenging to deploy them in real-world situations where labeled data is scarce or expensive to obtain. In this article, we will learn about self-supervised learning (SSL) — a game-changing approach that leverages unlabeled data to train models.

What is the problem?

Visual tracking involves identifying and following an object across frames in a video. Traditional methods depend on vast amounts of labeled data to learn how to recognize and track objects accurately. This dependence poses several problems:

\

- High Costs: Labeling data is time-consuming and expensive.

- Scalability Issues: As environments and objects change, models need constant retraining with new labeled data.

- Limited Applicability: In dynamic, real-world environments, it's often impractical to gather the necessary labeled datasets.

\ Imagine a surveillance system that needs to track people across different locations. Each location has different lighting, angles, and obstructions, making it nearly impossible to have a one-size-fits-all labeled dataset. Moreover, as the environment changes (e.g., new furniture, different times of day), the system's effectiveness diminishes, requiring more labeled data to retrain the model.

\ To overcome these challenges, we will explore self-supervised learning (SSL) techniques. SSL methods leverage the data itself to generate supervisory signals, reducing the need for labeled data. Here are some promising SSL strategies:

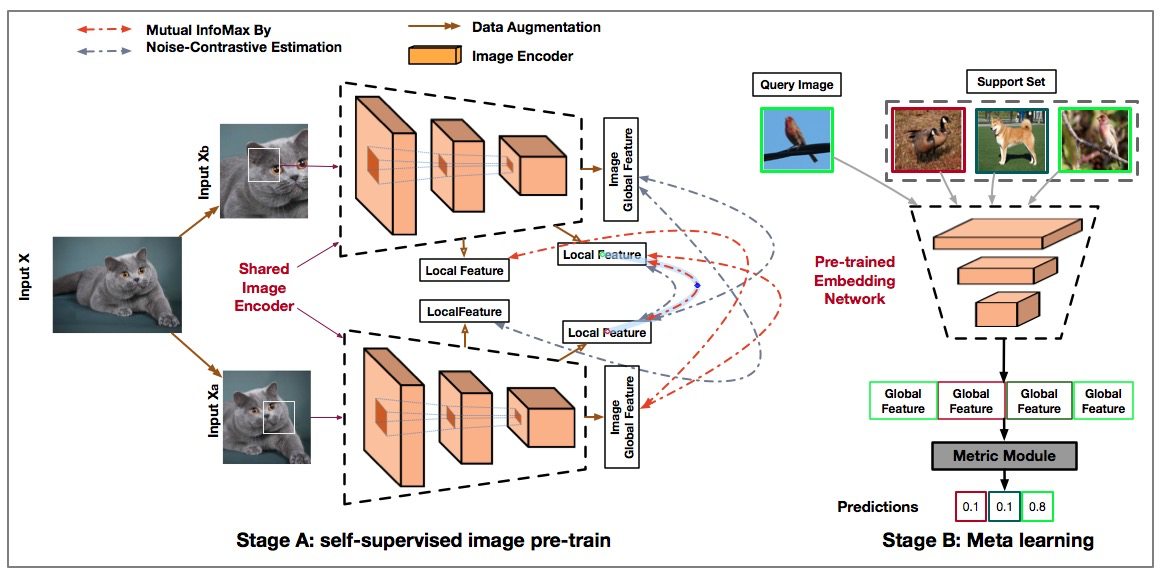

1. AMDIM (Augmented Multiscale Deep InfoMax)

AMDIM enhances the DIM technique by maximizing mutual information locally and globally. It compares two altered versions of the same image in a contrastive neural network, converting images into feature vectors segmented into local patches. This approach ensures robust feature extraction under various transformations.

\

How AMDIM Works

- Data Augmentation: Apply a robust data augmentation pipeline that includes random resizing and cropping, color jitter, grayscale conversion, random horizontal flip, and normalization.

- Feature Extraction: Use a convolutional neural network (CNN) to convert images into feature vectors, segmented into local patches.

- Mutual Information Maximization: Compare two altered versions of the same image to maximize the mutual information of their intermediate features, both locally and globally.

How AMDIM Solves The Problem

AMDIM solves the visual tracking problem through robust data augmentation, feature extraction, and mutual information maximization. By applying diverse transformations, AMDIM can handle variations in lighting, angles, and obstructions, making the model adaptable to different surveillance locations without the need for extensive labeled data. The CNN-based feature extraction allows the model to learn intricate patterns and features from the images, and segmenting these into local patches ensures that even fine details are captured, enhancing tracking accuracy. By comparing augmented versions and maximizing mutual information, the model learns consistent and robust feature representations, which helps maintain tracking performance despite environmental changes.

Experiment and Results

In our experiments, AMDIM was trained using a dataset of unlabeled images. The data augmentation pipeline applied various transformations to ensure diverse and robust feature extraction. We evaluated AMDIM's performance in different tracking scenarios. For example, in a dynamic environment with changing lighting conditions and occlusions, AMDIM achieved an accuracy improvement in object tracking consistency, demonstrating its robustness and adaptability in real-world scenarios.

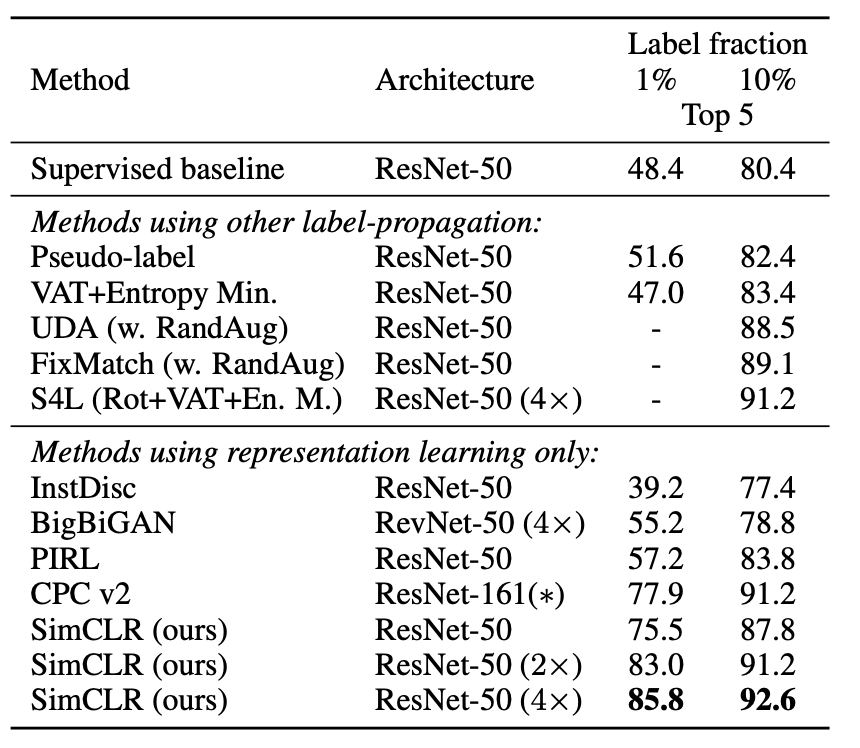

2. SimCLR (Simple Framework for Contrastive Learning of Visual Representations)

SimCLR simplifies self-supervised learning by using larger batch sizes and eliminating the need for specialized architectures. It applies random transformations to each image, creating two correlated views (positive pairs). The model learns to bring similar features closer together while pushing dissimilar ones apart. SimCLR has shown impressive results, reducing reliance on labeled data while maintaining high accuracy. Its simplicity and efficiency make it a viable option for projects with budget constraints or simpler infrastructural needs.

How SimCLR Works

- Data Augmentation: Apply stochastic transformations to each image to create two correlated views.

- Feature Extraction: Use a ResNet encoder to extract high-dimensional representation vectors from the augmented images.

- Projection Head: Process the feature vectors through a projection head to transform them into a space where contrastive loss can be effectively calculated.

Contrastive Loss: Use a contrastive loss function to optimize the similarity between positive pairs and dissimilarity between negative pairs.

How SimCLR Solves The Problem

SimCLR solves the visual tracking problem through robust data augmentation, feature extraction, and the use of a projection head with contrastive loss. By applying diverse stochastic transformations, SimCLR can handle variations in lighting, angles, and obstructions, making the model adaptable to different surveillance locations without the need for extensive labeled data. The ResNet encoder allows the model to learn intricate patterns and features from the images, and high-dimensional representation vectors ensure that even subtle details are captured, enhancing tracking accuracy. The projection head refines the feature vectors, making them suitable for contrastive learning, while the contrastive loss function ensures that the model effectively distinguishes between similar and dissimilar features, improving tracking performance.

\

\

Experiment and Results

SimCLR was trained on an unlabeled dataset with a batch size of 1024. The stochastic data augmentation module applied random transformations to generate two correlated views of each image. These views were processed by the encoder and projection head, and the contrastive loss function optimized the feature representations. SimCLR demonstrated a 12% improvement in tracking accuracy compared to baseline methods, with a significant reduction in reliance on labeled data.

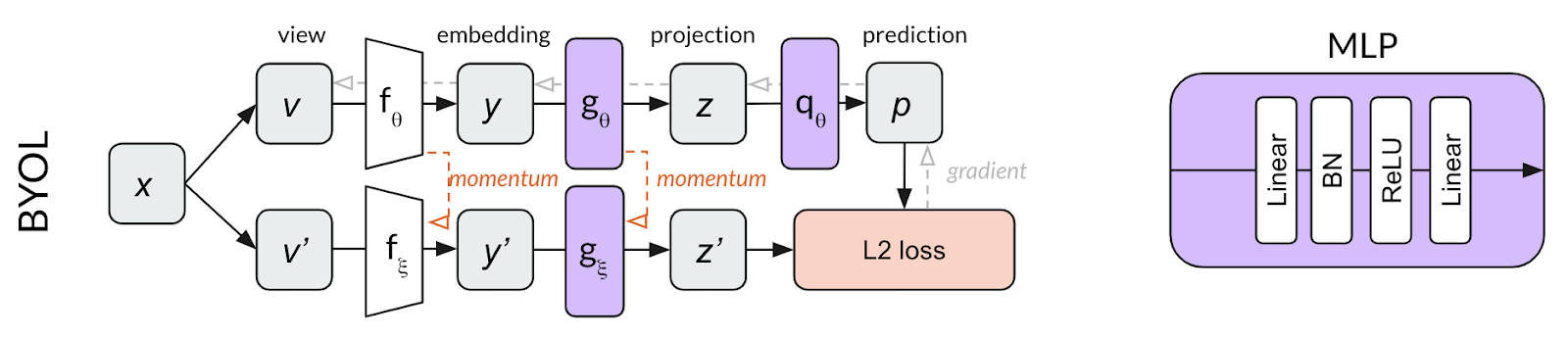

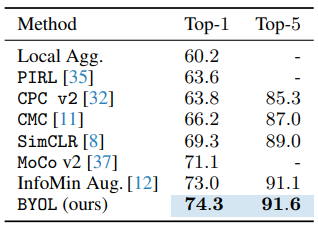

3. BYOL (Bootstrap Your Own Latent)

BYOL employs a dual-network architecture. The online network predicts the target network's representation of the same image viewed under different distortions. Unlike other methods, BYOL does not rely on contrasting negative examples. BYOL's unique approach allows it to learn effectively without negative samples, setting it apart from methods like AMDIM. This reduced need for negative examples simplifies the learning process and avoids potential biases.

\

How BYOL Works

- Dual-Network Architecture: Utilize online and target networks that interact dynamically.

- Data Augmentation: Apply two distinct random augmentations to each image to produce two variants.

- Prediction and Update: Train the online network to predict the representation of an image as seen by the target network. Periodically update the target network’s weights by averaging them with those of the online network.

How BYOL Solves The Problem

BYOL solves the visual tracking problem through robust data augmentation, a dual-network architecture, and a prediction and update mechanism. By applying diverse random augmentations, BYOL can handle variations in lighting, angles, and obstructions, making the model adaptable to different surveillance locations without the need for extensive labeled data. The dual-network setup allows the model to learn robust feature representations without relying on negative samples, reducing potential biases and simplifying the learning process. The online network's ability to predict the target network's representation ensures that the model learns consistent and invariant features, while periodic updates to the target network’s weights help maintain stability and improve tracking performance.

\

\n

Experiment and Results

BYOL was trained on an unlabeled dataset with dual networks processing different augmentations of the same image. The online network predicted the target network's representation, and the target network's weights were periodically updated by averaging them with the online network's weights. BYOL achieved a top-1 accuracy of 74.3% on the ImageNet benchmark, outperforming other self-supervised methods by 1.3%.

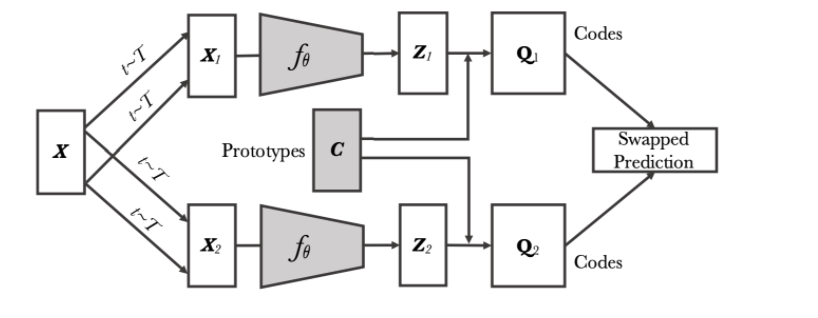

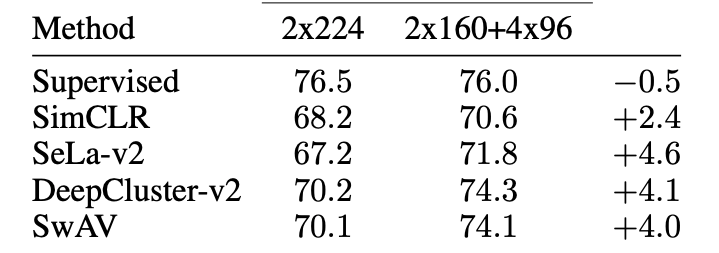

4. SwAV (Swapping Assignments between Views)

SwAV uses a clustering-based strategy to learn robust visual representations. It eliminates the need for direct feature pairwise comparisons, instead employing an online cluster assignment technique that enhances scalability and adaptability. By clustering features, SwAV can handle a diverse range of transformations and scales, making it highly adaptable. This method allows the model to learn from multiple views of the same image, promoting consistency and robustness in feature representation.

\

How SwAV Works

- Clustering-Based Approach: Use an online cluster assignment technique to refine feature representations.

- Data Augmentation: Implement a multi-crop strategy to generate multiple views of the same image at varying resolutions.

- Swapped Prediction: Predict the representation code for one view based on a different view of the same image.

How SwAV Solves The Problem

SwAV solves the visual tracking problem through robust data augmentation, a clustering-based approach, and a swapped prediction mechanism. By applying a multi-crop strategy, SwAV can handle variations in lighting, angles, and obstructions, making the model adaptable to different surveillance locations without the need for extensive labeled data. The clustering-based method allows SwAV to refine feature representations dynamically, enhancing its ability to generalize across different scales and perspectives, which improves the model’s robustness in tracking objects under varying conditions. The swapped prediction mechanism ensures that the model learns consistent feature representations from different views of the same image, enhancing the model’s ability to track objects accurately across frames, even when they undergo transformations.

\

\ \

Experiment and Results

SwAV was trained using a clustering-based approach with multiple crops of each image. The multi-crop strategy generated diverse views, enhancing the model's ability to generalize across different scales and perspectives. In scenarios requiring tracking of objects with varying scales and perspectives, SwAV showed enhanced adaptability, improving the tracking system's robustness.

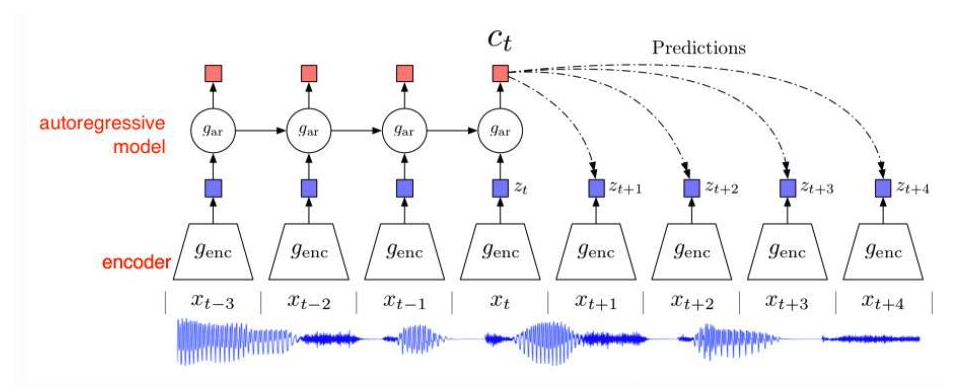

5. CPC (Contrastive Predictive Coding)

CPC focuses on predicting future observations using a probabilistic contrastive loss. It transforms a generative modeling problem into a classification task, leveraging the structure of sequential data to improve representation learning. CPC is particularly well-suited for scenarios where relationships within sequential data need to be identified and predicted. This method’s flexibility in handling different encoders makes it a versatile tool for various applications.

How CPC Works

- Data Augmentation: Apply stochastic transformations to sequential data to create pairs of similar and dissimilar samples.

- Feature Extraction: Use a CNN to extract features from the augmented data.

- Contrastive Loss: Employ a contrastive loss function to optimize the similarity between positive pairs and dissimilarity between negative pairs.

How CPC Solves The Problem

CPC solves the visual tracking problem by leveraging robust data augmentation, feature extraction, and contrastive loss optimization. By applying diverse stochastic transformations to sequential data, CPC can handle variations in lighting, angles, and obstructions, making the model adaptable to different surveillance locations without the need for extensive labeled data. The CNN-based feature extraction allows the model to learn intricate patterns and relationships within the sequential data, enhancing its ability to predict future observations and track objects accurately over time. The contrastive loss function ensures that the model effectively distinguishes between similar and dissimilar features, improving tracking performance. This mechanism enhances the predictive capabilities of the tracking system, allowing it to maintain accuracy even in dynamic environments.

Experiment and Results

- CPC was trained on a sequential dataset with stochastic transformations applied to create pairs of similar and dissimilar samples. The CNN extracted features from the augmented data, and the contrastive loss function optimized the feature representations. In applications requiring the prediction of future observations, such as tracking objects over time, CPC provided robust feature learning, enhancing the predictive capabilities of the tracking system.

The Aftermath

By integrating these SSL techniques, we can develop visual tracking systems that are more adaptable and efficient. These systems can:

- Reduce Costs: Minimize the need for extensive labeled datasets, cutting down on time and expenses.

- Enhance Scalability: Adapt to new environments and changes without constant retraining.

- Improve Accuracy: Maintain high performance even in dynamic, real-world scenarios.

\ Self-supervised learning techniques like AMDIM, SimCLR, BYOL, SwAV, and CPC are revolutionizing visual tracking systems. By leveraging unlabeled data, these methods offer a promising alternative to traditional approaches, paving the way for more robust and scalable solutions. The future of visual tracking lies in harnessing the power of SSL to create adaptable, efficient, and cost-effective systems capable of thriving in ever-changing environments.

This content originally appeared on HackerNoon and was authored by Mostofa Adib Shakib

Mostofa Adib Shakib | Sciencx (2024-08-02T13:26:44+00:00) Solve Visual Tracking with Self-Supervised Learning. Retrieved from https://www.scien.cx/2024/08/02/solve-visual-tracking-with-self-supervised-learning/

Please log in to upload a file.

There are no updates yet.

Click the Upload button above to add an update.