This content originally appeared on HackerNoon and was authored by DataStax

Interacting with databases often requires a level of technical expertise that can put data out of easy reach for a lot of people. Consider a finance executive who needs to understand the company’s financial numbers and trends. Traditionally, this executive would have to rely on SQL analysts to extract the necessary data from the database. This dependency can cause delays and communication gaps, especially if the executive needs to refine their queries multiple times to get the desired insights.

\ But text2SQL, a capability that converts natural language to structured query language statements, has changed the game. With text2SQL, the finance executive can directly interact with the database using natural language. For instance, a user can input a business-facing question such as "What was the average order value for each customer last month?"

\ The Text-to-SQL AI engine will process the question, and generate the corresponding SQL query:

\

Select customer_id, AVG(order_value) AS average_order_value FROM orders WHERE order_date >= DATE_SUB(CURRDATE(), INTERVAL 1 MONTH) GROUP BY customer_id;

\ Then execute it against the database and display the results to the user.

\ In this article, we'll explain the role of LLMs in text2SQL, discuss the challenges inherent in this capability, and explore SherloQ, a highly accurate and robust text2SQL engine developed by the Skypoint team.

The Role of LLMs in text2SQL

The ability to convert text2SQL has improved significantly with the help of large language models (LLMs). These models use vast amounts of data and powerful neural network architectures to understand and generate human-like text. By training on diverse datasets, LLMs can generalize across various tasks, including the translation of natural language into SQL queries.

\ For example, the paper "Language Models are Few-Shot Learners" demonstrates how LLMs can perform tasks with minimal examples, highlighting their ability to adapt to new tasks with limited data. This approach significantly reduces the need for extensive task-specific data, making it easier to deploy LLMs in various applications.

\ The "Spider: A Large-Scale Human-Labeled Dataset for Complex and Cross-Domain Semantic Parsing and Text-to-SQL Task" provides a comprehensive dataset for training and evaluating models on complex SQL queries across different domains. This dataset has been pivotal in advancing the state-of-the-art in text2SQL by providing a robust benchmark for model performance.

\ Additionally, "PALM: Scaling Language Modeling with Pathways," explores how advanced training techniques, such as scaling up model sizes and optimizing training pathways, can enhance model performance in various applications, including text2SQL.

\ While these LLMs are highly effective in controlled environments, they often face challenges in production settings. These include handling ambiguous prompts, managing complex database schemas, and ensuring real-time performance. Additionally, integrating these models into existing systems requires significant effort and ongoing maintenance to adapt to changing data and user requirements. Here are three LLMs that work well for this task:

SQLcoder

The main purpose of the SQLcoder is to convert natural language input to SQL queries. Unlike the other general-purpose models, SQLCoder has been refined on data specific to SQL, hence it is especially effective at understanding and generating SQL queries. SQLCoder demonstrates a notable performance on the Spider dataset, a complex, and cross-domain benchmark for text2SQL systems.

\ SQLCoder generates the correct SQL query for novel schemas not seen in training with an accuracy of 64.6%. It outperforms GPT-3.5-turbo and text-davinci-003, which are models more than 10x its size. This highlights SQLCoder's capability to handle diverse and intricate SQL queries, which is critical for production environments.

GPT 3.5/GPT 4

GPT-3.5 and GPT-4 (Generative Pre-trained Transformer) are some of the most advanced and effective general-purpose language models. Both models excel in few-shot learning, quickly adapting to new tasks with minimal examples, which is ideal for creating SQL queries from limited input.

\ For instance, when evaluated on the Spider dataset, GPT-3.5-turbo has a percentage of correctly generated SQL queries on novel schemas not seen in the training of 60.6%, while GPT-4 achieves 74.3%.

\ These models exhibit robust performance, particularly in understanding context and generating accurate SQL queries from complex natural language inputs. However, their general-purpose design sometimes requires additional fine-tuning for optimal results in specialized applications like SQL generation.

PaLM SQL

PaLM (Pathways Language Model) SQL is another powerful model developed by Google. PaLM SQL's advanced capabilities and architecture make it highly efficient in translating natural language into SQL queries and handling complex and diverse database schemas with greater accuracy.

\ Despite the advancements in LLMs, relying on a single LLM for text2SQL in production can be problematic. A single model will not be able to handle the wide variety of queries, database schemas, and real-time latency requirements of an enterprise data environment effectively.

\ Production environments demand robustness, adaptability, and the ability to deal with ambiguous prompts from real-world business users. Therefore, a text2SQL engine should exhibit three properties to be considered production-grade:

\ The ability to understand diverse query formulations - Syntactically different user prompts can be reduced to the same SQL query; a good text2SQL engine should be able to understand the motivation behind a user prompt, keeping the context of the data model in mind, and should formulate the SQL query accordingly.

\ The ability to work with ambiguous database schemas and data models - Production data models are prone to be chaotic with multiple different teams within an organization contributing to them and data changing ownership multiple times throughout its lifecycle.

\ A good text2SQL engine should have the ability to disambiguate the data model for the user and ensure that the noise and ambiguity do not lead to hallucinations, a characteristic of current LLMs that deter a lot of production users.

\ It should ensure that the workings of the engine do not add significant latencies to the execution of the query - Answers that a user expects in real-time should return in real-time. This means that the engine should formulate optimal queries within the first three tries at most.

Introducing SherloQ

SherloQ is Skypoint’s text2SQL engine that translates queries from natural language to SQL. While leveraging a custom in-house (LLM for query generation is a significant part of its architecture, SherloQ's effectiveness comes from a combination of advanced components designed to enhance data querying capabilities. SherloQ boasts high accuracy in query translation, robust error handling, and seamless integration with production database systems, making it suitable for large-scale data environments.

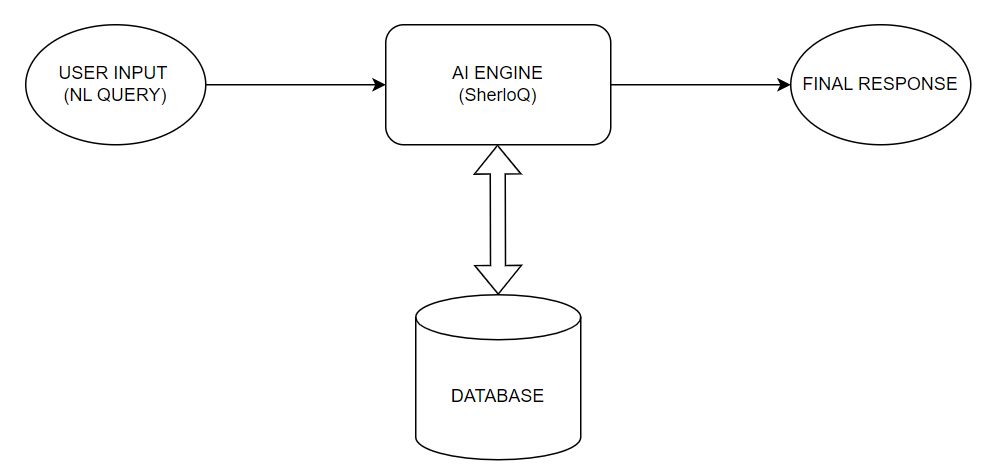

\

\ In the next few sections, we delve into the internal architectural details of SherloQ and share some of the results that we have achieved using it in production settings.

Architectural Components of SherloQ

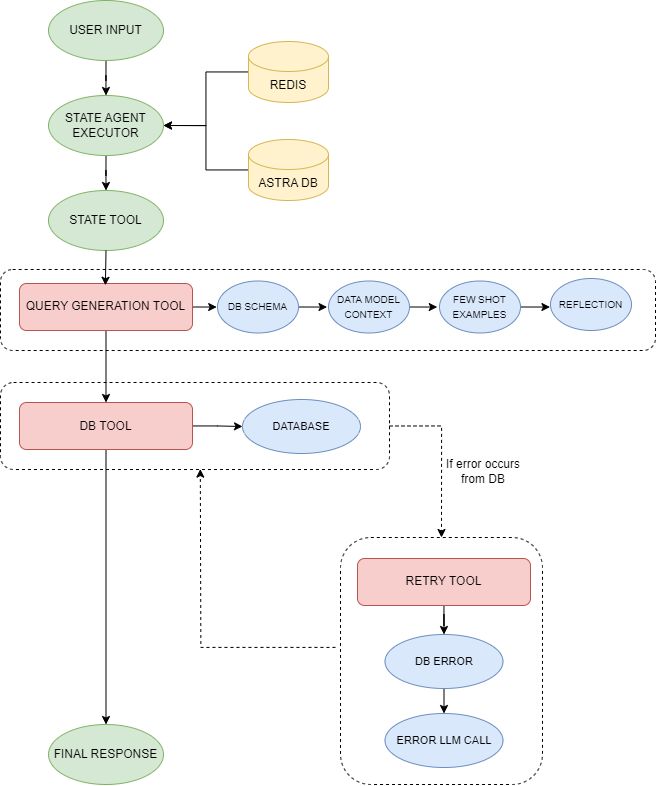

SherloQ's architecture contains multiple moving pieces that each work to improve the system's accuracy, reliability, and latency. Here's an overview of the architecture:

\

\ \ User input - The user input is a natural language query.

\ State agent Eeecutor- Our implementation of a LangChain interface that tracks the state throughout the execution process. It leverages Redis and DataStax Astra DB to track the state of reasoning and memory during execution. The executor manages the flow of operations by coordinating between different modules.

\ It ensures that user inputs are correctly parsed, processed, and forwarded to subsequent components, maintaining a flow from input to SQL query generation.

\ State tool - An extended class of the Langchain Base Tool that has a state variable and passes that state to the respective tool. By maintaining a state variable, the state tool keeps track of the necessary data that needs to be forwarded to the respective tools. This ensures consistency in the data flow, preventing any loss of information during the transition between agents.

\ The state tools of SherloQ:

- The query generation tool uses the retrieved few-shot queries, data model context, and DB schema to generate the initial SQL query. It enhances the accuracy and relevance of generated SQL queries by using parsed schema information and learning from contextual examples.

\

- The retry tool analyses any error thrown during query execution and regenerates a query that addresses the error.

\

- The DB tool helps fetch the database schema and corresponding metadata (annotations, comments, etc.) that would be helpful for the query generation. The retrieved schema information is cached using a time-based caching mechanism to optimize performance and reduce database queries. It also executes the query against the database and returns the results or an error stack trace.

Data Inputs and Techniques for Enhancing SherloQ’s Performance

To enhance SherloQ's performance, we employ several techniques and provide the model with important data inputs. These consist of structured decomposition, few-shot examples, data model context retrieval, reflection, and a retry mechanism. Each part is essential in improving the model's ability to produce precise and relevant SQL queries from natural language inputs.

Structured Decomposition

In structured decomposition, the user prompt is broken down into its fundamental parts. Focusing on and identifying the essential components of the query helps the model in producing accurate SQL. For example:

\ Input: What is the current quantity available for all items supplied by XYZ Corp?

Output: ["current quantity available", "all items", "supplied by XYZ Corp"]

Few-Shot Examples

The model is given reference questions in the form of a few shot SQL examples, which help it generate SQL queries based on similar patterns. These examples improve the model's ability to accurately generate new SQL queries for various prompts by helping it recognize the structure and format of the intended queries. Here are a few examples:

\ Example 1

Input: Show items with a quantity of less than 20 units available.

Output: SELECT * FROM stock WHERE Quantity_Available < 20;

\ Example 2

Input: List vendors with items priced above $100.

Output: SELECT * FROM vendors WHERE VendorID IN (SELECT VendorID FROM items WHERE Unit_Price > 100);

\ We dynamically select the most similar few shot examples using semantic similarity matching from Astra DB which is our vector database. The vector database allows us to find the examples that are closest in structure and content to the new input query, ensuring that the model can leverage the most relevant patterns to generate accurate SQL queries.

\ In our production environment, we average about two few-shot examples per query. In our experience, simply adding more few-shot examples to improve accuracy is not a scalable practice.

Data Model Context

Data model context contains domain-specific details that can be useful in creating an SQL query. For example, in the context of financial data for a hospital network, these can be things like finance metric codes and their descriptions. This context is used by the model to make sure that the SQL queries that are created match the data structure of the domain. The inclusion of data model context is optional and is required only when the table is complex and requires domain knowledge to form an SQL query.

\ For example:

Metric codes: " MGMTFEE " -> Description: "Fees collected for managing properties – Management Fees"

SQL DB Schema

The SQL DB schema is the structured representation of the available data. The schema is enriched by annotating both the table and its columns. It includes table names and descriptions, along with the columns, their descriptions, and data types.

\ Providing the schema helps the model understand the database structure and the meaning associated with each table and column, ensuring that the generated SQL queries are syntactically correct and utilize the correct database elements.

Reflection

Reflection refers to the model's ability to assess and evaluate its own past responses, behavior, or generated content. It allows the model to refine its responses by identifying and correcting errors or weaknesses. The process includes considering queries previously seen by the Sherloq engine along with feedback during the query generation phase.

\ These scenarios help the model to replicate the successes or avoid the failures of similar questions that it has seen in the past. This step also leverages Astra DB to find the most semantically similar queries to the current one.

Retry Mechanism

SherloQ incorporates a retry mechanism using the errors received from the database. When a generated SQL query results in an error, the engine uses an agent with a predefined template to correct the query:

\ “””

Your task is to fix an incorrect SQL query generated from a question to make it compatible with ANSI SQL. Follow these guidelines: Carefully analyze the question, database schema, and the error message received to ensure accurate answers. Utilize Table Aliases to avoid confusion.

\

For example, SELECT t1.col1, t2.col1 FROM table1 AS t1 JOIN table2 AS t2 ON t1.id = t2.id.

\ When calculating rations, always convert the numerator to a float. Use the below format:

Task:

Generate an SQL query to address the question

[QUESTION]{user_input}[/QUESTION]

The query to be correct is: {sqlquery}

The error message received during the last execution is: {errormessage}

Response:

Based on the above inputs, here is the corrected SQL query: {response_sql} “””

Comparing Results: Before and after SherloQ implementation

With the use of advanced LLMs and a well-designed system, SherloQ produces SQL queries that are more precise and relevant to the context. Here, we compare the results of user prompts before and after SherloQ using the metrics of latency and accuracy.

Comparison Using One Production Question

Here, we look at one of our production customers, a well-known senior living operator. One of their use cases is their finance team using Skypoint’s platform to understand and reason over the consolidated financial metrics of their different senior living facilities.

\ User prompt: "Determine the quarterly trend of Housekeeping Expenses for facility CM throughout 2022."

\ Before SherloQ

Generated query

SELECT SUM(TotalAmountNumerator) / SUM(TotalAmountDenominator) AS HousekeepingExpenses FROM skypointmetricfactdenormalizedvw WHERE FacilityName = 'CM' AND Year = 2022 AND MetricName = 'Housekeeping Expense’ GROUP BY QuarterNumber';

\

Issues identified

The query groups by Quarter_Number but does not select it, which can cause incomplete results.

Use of MetricName instead of a more specific identifier like MetricCode.

Potential division by zero is not handled in SUM(TotalAmountDenominator).

\

Average latency: 29 Seconds

The before-SherloQ setup used a combination of GPT-3.5 along with prompt engineering and 5+ few-shot queries per user prompt.

\ With SherloQ

Generated query

SELECT Year, QuarterNumber, SUM(TotalAmountNumerator) / COALESCE(SUM(NULLIF(TotalAmountDenominator,0)),1) AS HousekeepingExpenses FROM skypointmetricfactdenormalizedvwWHERE MetricCode = 'EXPOTHHOU' AND FacilityName = 'CM' AND Year = 2022 GROUP BY QuarterNumber, Year ORDER BY QuarterNumber ASC;

\

Improvements noted

The query includes Quarter_Number, providing the necessary quarterly breakdown.

The Metric_Code field is used, providing a more precise identifier for the metric.

The COALESCE(SUM(NULLIF(TotalAmountDenominator,0)),1) function handles potential division by zero errors.

The results are ordered by Quarter_Number to reflect the quarterly trend.

Average Latency: 10 seconds

\ Based on benchmarks on production workloads, below are results that we report on accuracy and reliability before and with SherloQ:

Before SherloQ

Accuracy: 65%

Reliability: 60%

\

With SherloQ

Accuracy: 92%

Reliability: 90%

\

The above results were drawn from an internal benchmark suite that executes every prompt 100 times with separate identifiers to negate the effects of caching (in our internal systems as well as the models). The suite measures accuracy by comparing the returned response against a benchmark response and reliability by measuring how often it would return similar responses.

\ The comparison clearly illustrates the advantages of SherloQ in transforming natural language queries into accurate SQL queries. Overall performance improved by 30% after SherloQ. The queries generated earlier suffered from issues such as incomplete results and a lack of error handling, impacting both accuracy and reliability.

\ With SherloQ, the generated queries are more precise, efficient, and robust, with notable improvements in latency, accuracy, and reliability. This enhancement shows SherloQ's capability to deliver dependable data retrieval, making it a valuable tool for organizations seeking to optimize their data querying processes.

\ To explore other parts of the Skypoint platform or to book a demo of SkyPoint AI, visit the Skypoint website.

\ By Alok Raj, Lead AI Engineer, Skypoint, and Sayandip Sarkar, Head of Engineering, Skypoint

This content originally appeared on HackerNoon and was authored by DataStax

DataStax | Sciencx (2024-08-13T00:56:16+00:00) How to Build a Production-Grade Text2SQL Engine. Retrieved from https://www.scien.cx/2024/08/13/how-to-build-a-production-grade-text2sql-engine/

Please log in to upload a file.

There are no updates yet.

Click the Upload button above to add an update.